Helios Interactive worked closely with BeMyApp and Intel to provide a conducive environment for its 24-hour hackathon in San Francisco. The goal of the hackathon was to engage the development community with the Intel Perceptual Computing SDK and camera. The event hosted over 60 participants and produced more than 20 hacks using this new device. Everything from JavaScript and C++ to Unity3D and Processing was used to create applications for the hackathon.

The camera above is what allowed it all to happen. Its unofficial nickname is the PerC. It is a close-range input device that allows for face position and feature tracking, voice recognition, finger and hand tracking, and finger poses. Right now the camera is simply placed on top of a laptop or monitor. The technology will become much smaller and will eventually be integrated into laptops and possibly mobile phones and tablets. Entries for the Intel Perceptual Computing Challenge have just closed, and we can’t wait to see how our apps perform and what other folks were able to create. It’s safe to say that the PerC is similar to the Microsoft Kinect, but there are some key differences.

| Kinect | PerC |
|---|---|
| Works best indoors in a “living room” setting. | Works best in any indoor setting. |
| Features full body skeleton tracking. | Full hand and fingertip tracking (plus a few gestures like swipe). |
| Voice recognition capabilities. | Voice recognition capabilities. |
| Works best 3-9 feet from the camera. | Works best up to 3 feet from the camera. |

The Hackathon Winners

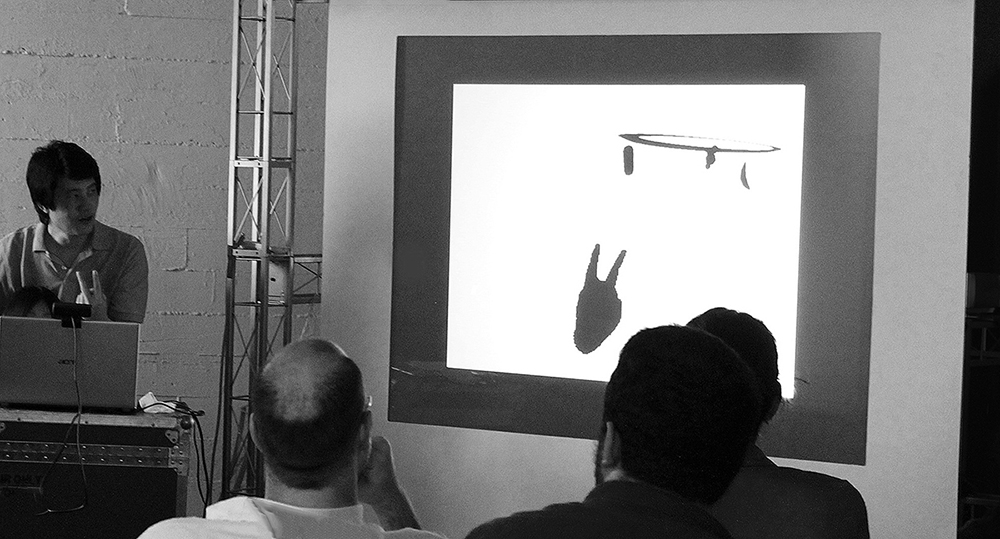

My Pet Shadow was built using Unity3D with hand tracking and voice recognition. This stylistic game allowed players to hold their own “pet shadow” in their hands and tell it to do tricks or feed it.

Motion Map was built using C++ and demoed with JavaScript, implementing finger tracking. The team created a Chrome extension that allowed the SDK to be used inside the browser to control Google Maps. JavaScript is currently not supported by the SDK.

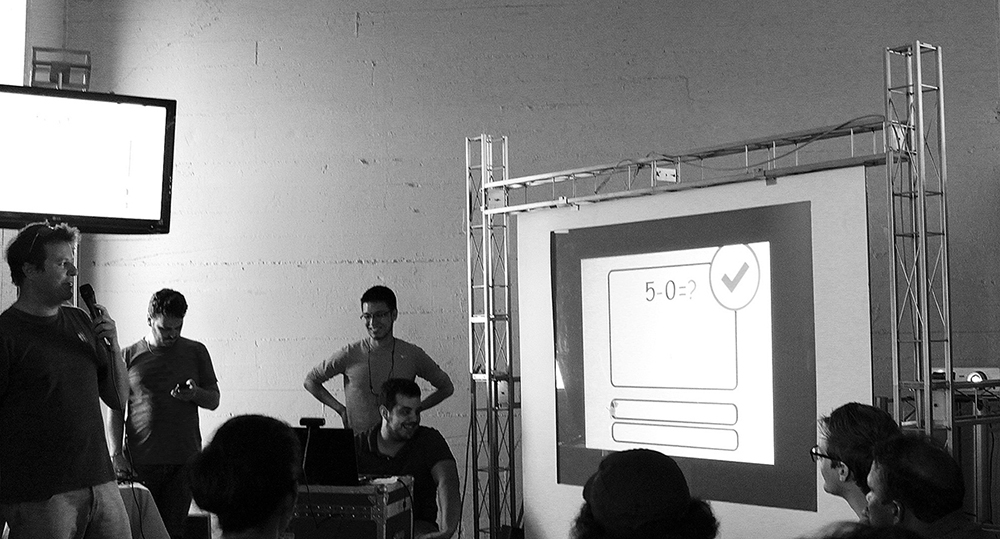

Learning with Luca was built with Unity3D, leveraging finger poses and voice recognition. It is an educational multiplayer game in which users learn simple arithmetic by using finger poses and voice recognition to select flashcards.

Space Palette, a continuing interactive art installation by Tim Thompson, was adapted to use multiple PerC cameras at once instead of a Kinect.

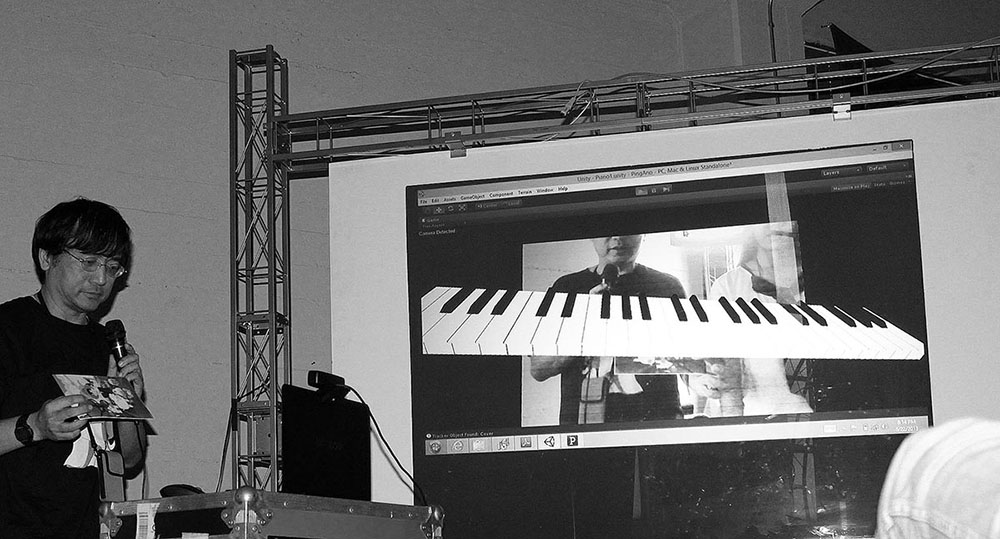

AR Piano uses an AR target marker, which lets users place a virtual piano anywhere in the camera feed and play notes using their fingers.